The Digital Economy of Disinformation on the Darknet: Controlling the Narrative

As we introduced in our previous blog post, DarkOwl analysts have observed a now well-established digital economy on the darknet around the trade of social media accounts and their influencers. These accounts are sold in bulk and could easily be leveraged for a dis- or misinformation campaign by a foreign government or agency with malicious intent.

In this blog, we look at how the darknet is rife with “disinformation as a service” type offerings, and how technology such as blockchain is now being leveraged to persistently disseminate false narratives to the public.

Clarifying the meaning of “disinformation campaigns”

Put simply, a disinformation campaign is a psychological operation that manipulates a target’s perception of select topics, using strategic methods to disseminate falsehoods and half-truths through various media. These campaigns are usually multifaceted and comprehensive, using a mix of social media account activity and illegitimate news publications in which disinformation can be disguised in a highly sophisticated and believable fashion.

CONSENSUS CRACK DEVELOPMENT

Social media continues to be a powerful tool for conducting disinformation campaigns, especially since large quantities of pre-verified, fake social media accounts continue to be readily available for purchase on the darknet. By controlling large volumes of fake social media accounts, perpetrators are able to facilitate what the historical COINTEL “Gentleman’s Guide to Forum Spies” calls Consensus Crack Development. In this disinformation tactic, an agent operating under the guise of a fake account posts a claim on social media or a forum that appears legitimate and points toward some objective truth, but that rests on a generally weak premise with no substantive proof to back it.

Once the content has been posted as truth, other fake accounts also under the agent’s control post comments both agreeing and disagreeing, presenting both viewpoints initially, and the dialogue between the fake accounts continues until the intended consensus is solidified.

Disinformation as a Service: a darknet exclusive

The darknet is a known playground for disinformation campaigns, and its users are fairly adept at detecting disinformation, especially across anonymous image boards where a number of controversial groups like QAnon participate. One anonymous user on endchan advised, “don’t be fooled by disinformation, they almost always use truth but wrap it in disinformation,” noting the historical prevalence of outrageous conspiracy theories across the internet.

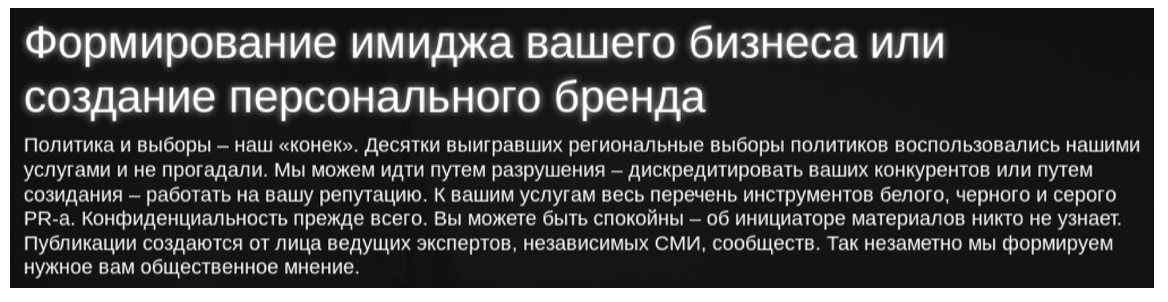

Of more concern is DarkOwl’s discovery of a number of Ukrainian- and Russian-speaking disinformation-as-a-service providers across the darknet, with a considerable footprint of offers and discussions related to information manipulation.

While most of these providers’ advertisements read like those of a commercial mass media company specializing in promoting the brand and image of a person or business, the providers solicit customers on cybercrime-focused darknet forums, where skills in online branding and mass marketing are put to malicious use, such as destroying a competitor’s brand and reputation.

To illustrate how these disinformation services are structured and advertised, we’ve put together brief profiles of three different vendors who are profiting in this space.

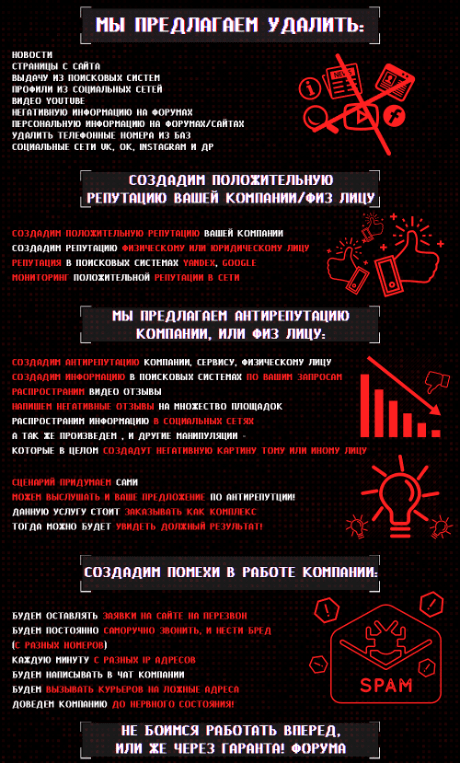

DARKNET VENDOR A: A SAMPLE MENU OF DISINFORMATION AND REPUTATION INFLUENCE OFFERINGS

One noteworthy disinformation-as-a-service provider also markets both reputation promotion and destruction services. English translations of the offerings on their brochure read:

We are offering to erase:

- News
- Pages from websites
- Results from search engines
- YouTube videos
- Negative comments on forums
- Personal information on forums
- Telephone numbers from databases
- Social media profiles (OK.ru, VK, Instagram & others)

We will create positive reputation for a company or identity. We can:

- Create a positive reputation for a company
- Create a positive reputation for individuals
- Improve reputation for search engines such as Yandex & Google
- Provide reputation monitoring across the web

We are offering anti-reputation services for a company or identity. We can:

- Create anti-reputation for a company, service or individual
- Create and post negative content and optimize it for search engines
- Post negative reviews and write negative comments on social media
- Create multiple negative narratives and experiences to legitimize the claims
- We will orchestrate the story (theatre) and can listen to your suggestions regarding anti-reputation
- This type of service is more complex and offered as a package for sale for results (and needed outcome)

We can create disruptions to the daily operations of a company. We can:

- Spam them by flooding them with questions on their site to contact them
- Continuously call the company from various phone lines and speak nonsense
- Every minute from different IP addresses
- Harass via website chat bots; send delivery companies fake addresses
- We will take the company where it started!

DARKNET VENDOR B: A SPECIALIST IN WHATSAPP CAMPAIGNS

Another reputable vendor on a popular Russian underground forum offers targeted, customized messaging via WhatsApp; mass social media information management through credible accounts on OK.ru, Facebook and Instagram, sold in bulk; and content removal from search engines using targeted critical search engine optimization (SEO).

Their offer describes their automated social media services as “a network of controlled biorobots that can convey to the masses any information you need.”

In the summer of 2018, WhatsApp messages widely circulated in rural Indian communities led to a number of violent mob lynchings in which strangers were wrongly accused of child kidnappings and attacked. WhatsApp countered the disinformation-sparked violence by limiting the number of times a message could be forwarded and the size of WhatsApp groups. (Source)

DARKNET VENDOR C: A PIONEER IN USING BLOCKCHAIN TECHNOLOGY TO PROPAGATE DISINFORMATION CONTENT ACROSS THE INTERNET

“Information without the possibility of being deleted” - Blockchain is now being leveraged to conduct persistent disinformation campaigns

Another notable vendor states that they employ a “blockchain-based botnet” to conduct persistent disinformation campaigns. DarkOwl analysts assess that this vendor has been active across many of the key Russian- and Ukrainian-speaking darknet forums for several years. In late 2019, the vendor debuted a commercial enterprise around their public relations services, listing partnerships with leading mass media across Russia, the CIS, Europe and the USA, and naming political campaigns and elections among their specialties.

The vendor, who submits their forum posts primarily in Ukrainian, marketed their blockchain-based approach by stating in an advertisement earlier this year that they can offer “information without the possibility of deletion.” The vendor further stated that by executing a disinformation program based on the blockchain, they are able to prevent the deletion of content, whether for the promotion of a business or the “funeral” of a competitor.

As of early 2020, the vendor offered such services for $500 USD for promotion or $700 for competitor disinformation.

After more targeted conversations and technical research on their approach, DarkOwl’s analysts found that using the blockchain for on-chain data storage is not only reliably secure, but also potentially turns the blockchain into a politically and architecturally decentralized ‘cloud’ for data preservation and persistence.

On-chain data storage works somewhat like the BitTorrent protocol in that files are broken into small pieces: the data is embedded across individual transactions, which are grouped into blocks (limited to roughly 1 MB each on Bitcoin), and copies are stored across many nodes. Because information written to the blockchain cannot be modified, the content is preserved. Blockchain data storage works best with smaller files, such as a modern HTML/CSS website, whereas video and other media files may be cost-prohibitive. For security purposes, the vendor did not specify which blockchain (Bitcoin, Ethereum) they prefer for their disinformation botnet.
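The chunk-and-preserve idea described above can be sketched in a few lines. This is only a toy illustration, not the vendor's method and not the actual data format of Bitcoin or Ethereum: the chunk size, the record layout, and the function names (`split_into_chunks`, `build_chain`, `verify_chain`, `reassemble`) are all assumptions made for the example. It shows why content stored this way resists silent modification: each record commits to the hash of the one before it, so altering any chunk invalidates everything after it.

```python
import hashlib

CHUNK_SIZE = 64  # bytes per chunk; hypothetical, far smaller than a real block
GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def split_into_chunks(data: bytes, size: int = CHUNK_SIZE) -> list:
    """Break a payload into fixed-size pieces (the last one may be shorter)."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def build_chain(chunks: list) -> list:
    """Link each chunk to its predecessor through a SHA-256 hash."""
    records = []
    prev_hash = GENESIS_HASH
    for index, chunk in enumerate(chunks):
        digest = hashlib.sha256(prev_hash.encode() + chunk).hexdigest()
        records.append(
            {"index": index, "prev_hash": prev_hash, "data": chunk, "hash": digest}
        )
        prev_hash = digest
    return records


def verify_chain(records: list) -> bool:
    """Recompute every hash; any edited chunk breaks the links that follow."""
    prev_hash = GENESIS_HASH
    for record in records:
        expected = hashlib.sha256(prev_hash.encode() + record["data"]).hexdigest()
        if record["prev_hash"] != prev_hash or record["hash"] != expected:
            return False
        prev_hash = record["hash"]
    return True


def reassemble(records: list) -> bytes:
    """Rebuild the original payload from the stored chunks, in order."""
    return b"".join(record["data"] for record in records)


# A small HTML page, the kind of payload that suits this storage model.
page = b"<html><body><h1>Example page</h1><p>" + b"x" * 200 + b"</p></body></html>"
chain = build_chain(split_into_chunks(page))
```

In a real blockchain the same property is enforced by many independent nodes holding copies of the chain, which is why a takedown request to a single host does not remove the content.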

NOTE: A popular darknet news source cites a 2019 Politico report on Volodymyr Zelenskyy’s controversial election and on how Facebook struggled to contain the spread of disinformation. Vendor C claims their services were instrumental in the social media disinformation circulated around the 2019 Ukrainian Presidential Election. According to the report, the most influential Facebook account had over 100,000 followers and ran a video claiming that Zelenskyy, a presidential candidate at the time, would allow Russia to take over the country with a violent military operation. Source, DarkOwl Vision: 30e9408d811ba5bbbf3c10b809da6107

A More Subtle and Simple Disinformation Technique: URL hijacking

Aside from content creation, social media manipulation, doxing and the dissemination of information en masse, DarkOwl’s partner, CyberDefenses, Inc., has recently uncovered a number of state and local election-related domains where criminals leverage URL hijacking and typosquatting to manipulate the narrative of the original source. Disinformation agents register a fake domain with a name spelled similarly to the original, often simply substituting an uppercase “I” (pronounced ‘eye’) for a lowercase “l” (L). They then replicate the original website’s exact design, color scheme and HTML/CSS layout, but change extremely subtle content, such as a single campaign policy or contact information, to misinform and misdirect visitors to the malicious website and potential voters for that candidate.

Depending on the efficacy of the malicious copy website’s SEO, the fake domain can sometimes emerge ahead of the original in popular search engine results for related keywords. URL hijacking can cause subtle election interference that can easily go undetected.
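For defenders, the look-alike substitution described above is straightforward to screen for. The following is a minimal sketch, assuming a small hand-picked table of visually confusable characters; the table, the function names (`skeleton`, `is_lookalike`) and the `.example` domains are hypothetical, and a production check would need a far larger confusables list. The idea is to reduce each domain to a "skeleton" in which confusable characters collapse to one canonical form, then flag domains whose skeletons match even though the names themselves differ.

```python
# Hypothetical, deliberately tiny table of characters that are easy to
# mistake for one another in many fonts.
CONFUSABLES = str.maketrans({
    "I": "l",  # uppercase "eye" rendered like lowercase "L"
    "1": "l",  # digit one rendered like lowercase "L"
    "0": "o",  # digit zero rendered like the letter "o"
})


def skeleton(domain: str) -> str:
    """Collapse confusable characters, then lowercase the result."""
    return domain.translate(CONFUSABLES).lower()


def is_lookalike(candidate: str, legitimate: str) -> bool:
    """True when the names differ but are visually near-identical."""
    same_domain = candidate.lower() == legitimate.lower()
    return (not same_domain) and skeleton(candidate) == skeleton(legitimate)


# Illustrative campaign domain (the .example TLD is reserved for documentation).
legitimate_domain = "smith-for-council.example"
suspect_domain = "smith-for-counciI.example"  # ends in an uppercase "I"
flagged = is_lookalike(suspect_domain, legitimate_domain)
```

A campaign or election office could run a check like this against newly registered domains to spot impostor sites before they gain search ranking.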

Other times, disinformation actors don’t even bother to use the darknet to sell their disinformation-as-a-service offerings. This happens most often in the context of financially-motivated actors who create disinformation or other sensationalist content in order to drive clicks to their ad-supported websites. DarkOwl recently spoke to cyber threat investigation company Nisos regarding their research into domains created in the North Macedonian town of Veles, which became famous during the 2016 US election cycle for US-focused disinformation created purely for financial motivations.

Nisos found that while a number of the more than 1,000 active domains created in Veles still focused on US politics, an even greater number hosted sensationalist health-related content, suggesting that health-related disinformation was likely more lucrative than political disinformation. Nisos also uncovered an extensive curriculum offered by an enterprising local web developer that provided detailed training on how to monetize such domains and market them on social media platforms.

Nisos’s findings suggest that while the focus on disinformation as an election threat may diminish after the 2020 US election cycle, disinformation actors will probably still deploy the disinformation tactics learned in political campaigns to spread disinformation for financial gain on topics of perennial interest such as health issues, gossip news, and other tabloid topics.

Financially motivated actors will hone tactics and techniques between election cycles that may fly below the radar of election-focused disinformation watchers. Yet because they constantly evolve in the cat-and-mouse game of evading detection by internet companies, these actors may resurface during the next major election cycle using tactics that are unrecognizable to researchers accustomed to the 2020 version of disinformation. “Pay attention to the ones doing it for money,” says Nisos researcher Matt Brock. “There will be a Darwinian selection process that will occur largely below the radar of disinformation researchers currently focused on threats to election integrity, but the tactics of the fittest financially-motivated survivors will likely spread to the next generation of ideologically-motivated disinformation actors in ways that we will miss if we’re not paying attention now.”

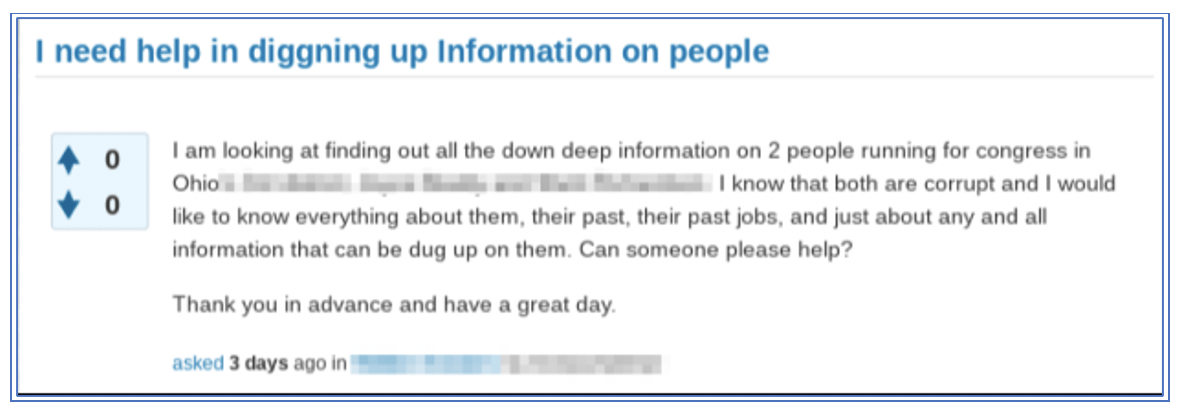

Also on the Darknet: Personal Forensics & Dirt Digging

Given the popularity of doxing services on the darknet, underground forums are also a popular resource for finding help in uncovering dirt on competitors and rival political candidates. Earlier this month, one anonymous user on a darknet forum reached out openly in a public thread asking for help “digging up information on people,” specifying by name two US Congressional candidates they were interested in. DarkOwl was unable to confirm whether this user’s request for assistance was fulfilled.

Election Disinformation Warnings Prominent

The U.S. government and its intelligence community publicly acknowledge the active dissemination of misleading information, and the impacts caused by sharing it, up until the election date and in the days immediately thereafter. In recent weeks, both the CIA and FBI have published warnings about foreign actors spreading disinformation around the imminent 2020 Presidential Election with the intention of discrediting the election’s legitimacy, and have cautioned the public about sharing online content across social media networks. (Source)

Anonymous networks with digital markets, forums, and image boards facilitate the spread of such misinformation, as is apparent from the volume of tools and services on offer and the number of criminal actors prominent in these sinister underground communities. In 2018, an internal, for-official-use-only article prepared by the Department of Homeland Security, and subsequently leaked on the darknet, indicated that the US government has been fully aware of customizable tools available for sale on the dark web that could “enhance a malign influence operation aimed at interfering with the 2018 US midterm elections by creating a seemingly legitimate and professionally made graphics displaying falsified election results.”

DarkOwl’s Vision system successfully captured the 2018 advertisement, submitted by an anonymous user of the darknet forum with over 10 years of forum experience, along with the product’s description detailing the broadcast. Similar offers for Election Night 2020 templates have been spotted, but the extent of their proliferation has not been ascertained.

(English Translation of original post)

“‘Election Night 2018’ is a fully customizable template that contains everything you need to create a great, bright video dedicated to the election. ‘Election Night 2018’ is incredibly easy to set up, so you can create a professional broadcast show in a very short time, regardless of whether you are creating a show for the presidential election or Federal and regional.”

– Source, DarkOwl Vision: be1fe1114d27b9ab9fd262ca43e4dcf0

Earlier in 2020, the U.S. State Department used its “Rewards for Justice” program to solicit tips from residents of former Eastern Bloc countries (Russia, Ukraine, Belarus) that could help authorities prevent possible digital election interference.

Russian-speaking users on a darknet forum, popular for cyber-crime coordination and malware trading, discussed the U.S. diplomats’ targeted request for information in detail, stating it was sent via bulk SMS text-message to residents of Saratov, Krasnodar, Vladivostok, Ulyanovsk, Chelyabinsk, Perm and Tyumen in Russia. One user suggested they should absurdly exploit the program by hiring a random homeless person to pretend to be a KGB or Fancy Bear sponsored hacker, equipping them with a laptop with hacker-like toolkits installed and signs with potential information the department would pay for.

A New Age of Disinformation: State Sponsored Propaganda to Conspiracy Theories

The concept of information operations via state-sponsored propaganda campaigns is hardly novel, but the lack of internet moderation and the mass transition into a digitally dependent, social media age, especially over the last two decades, have amplified the proliferation of disinformation en masse, particularly as it relates to geopolitical agendas and the construction of mass social ideology. Society’s lack of media literacy and critical thinking skills outside one’s personal area of expertise compounds the complexities of navigating the seas of digital propaganda.

In August, the U.S. Department of State Global Engagement Center (GEC) issued a Special Report outlining the Pillars of Russia’s Disinformation and Propaganda Ecosystem that details the complex information network of official government communications, state-funded global messaging, proxy resources, weaponized social media and cyber-enabled disinformation used by the Russian government in its global information operations campaigns.

Notably, the U.S. State Department report highlighted forgeries and cloned websites (URL hijacking) – consistent with DarkOwl and CyberDefenses’ observed research – as key cyber-enabled disinformation methods used by the Russian government.

A key takeaway from the report is how a multi-faceted information ecosystem “allows for the introduction of numerous variations of the same false narratives,” an approach consistent with the saying “Repeat a lie often enough and it becomes the truth,” regarded as the principal law of propaganda and historically attributed to Nazi Germany’s Minister of Propaganda, Joseph Goebbels. This was witnessed most recently at the height of the COVID-19 pandemic, when at least four global, “independent” news outlets (Global Research, SouthFront, New Eastern Outlook, and Strategic Culture Foundation), assessed by the GEC as “Kremlin-aligned disinformation proxies,” circulated hundreds of articles stating that COVID-19 was a U.S.-sponsored bio-weapon deployed against China, including defamation of Bill Gates and claims of CIA involvement. The proxies’ website and social media reach was reportedly considerable, with the “Canadian” Global Research outlet averaging over 350,000 readers per article during a three-month period in early 2020.

Seeing how disinformation campaigns control the narrative by spreading lies across social media and sometimes even trusted internet news outlets, together with our discovery of the prevalence of sophisticated disinformation-as-a-service providers, suggests that mere content removal will soon no longer be an available option for mitigating a disinformation campaign, especially outside of a social media platform. Blockchain-based biorobots and artificial intelligence operating out of Russia and Eastern Europe are just the latest cyber soldiers in the global psychological war of the information age.
