Threat Assessment in the Digital Age: Analyzing High-Volume Threatening Communication in Far-Right Telegram Channels

November 05, 2025

With increasing regularity, the media is filled with reports of mass shootings, assassinations, political violence, and other forms of targeted violence. While targeted violence is nothing new, our fractured society does appear to be experiencing these events more frequently as time goes on.

One of the ways in which law enforcement, security professionals, and healthcare professionals have sought to combat and prevent these acts of violence is through the practice of threat assessment: a systematic process, built over decades, that seeks to identify and prevent targeted violence by assessing behavior and managing risk.

However, in an increasingly digital age, the volume of data available to these professionals is ever growing. Whether they are monitoring social media for mentions of credible threats, reviewing large volumes of emails in response to a triggering event, or reviewing messaging apps, it can be impossible to identify which individuals actually pose a threat and the best way to assist them. This does not even take into consideration the difficulty of identifying the real person behind sometimes anonymous online personas.

This study focuses on high-volume threatening communication within far-right Telegram channels. The far-right is understood here as an umbrella term encompassing a diverse range of ideologies, movements, and political actors situated at the extreme end of the right-wing spectrum. While diverse, these groups usually share some characteristics: nationalism, racism, xenophobia, anti-democratic tendencies, or strong state advocacy (Mudde, 2000). All far-right ideologies view human inequality as natural and even desirable (Mudde, 2019). Translating definitions of ideology to the online sphere is challenging, since information about individuals or groups is often limited to their digital expressions. As Conway (2020) observes, the contemporary online far-right is best understood as a decentralized “scene,” “milieu,” or “ecology”: a fluid and rapidly shifting network of individuals, groups, movements, political parties, and media outlets that overlap and interact in complex ways.

Many of the far-right channels identified by DarkOwl remain active on the platform, which has allowed us to collect a substantial amount of data from the communications within the channels selected for this analysis.

Using a dataset collected from active far-right Telegram channels, DarkOwl and Mind Intelligence Labs sought to examine whether combining AI tools with manual analysis of the channels’ text-based content could enhance the identification of threats and deepen understanding of their nature to support threat assessors.

The far-right Telegram channels analyzed in this study contain a high volume of threatening communication, making it challenging to determine which threats are more credible than others. Our analysis shows that most threats are explicit and directed at specific targets. Operationally detailed threats are also common, indicating a normalization of violent rhetoric and a potential for mobilization within these online communities.

What is Threat Assessment?

Threat assessment is the process of identifying whether individuals may be at risk of engaging in targeted violence and managing that risk to prevent violence from occurring. Assessments are based on an individual’s observable behavior and therefore require a review of how the individual is acting and what they are saying, both online and in the “real world,” as well as communications of intent and contextual stressors.

Both the FBI and the Secret Service provide guidance for how to conduct threat assessment, highlighting that it is not just about identifying an initial risk, but ongoing management to prevent any risk that may be posed over time as an individual’s situation changes.

Key components of threat assessment include:

- Identify – Detect behaviors or statements suggesting that a person may be moving towards violence. This can include direct threats, planning behaviors, or having a grievance. Bystanders such as friends or family members are often the ones who report concerning behaviors, but such behaviors can also be detected through online communications that can be tracked.

- Assess – Collect and assess information about the person: what motivates them, what access they have, and what opportunities for violence they have. Have they shared a specific threat, and is it credible and/or viable? This can include a review of their online communications as well as interviews with colleagues or family members, and even the subject themselves.

- Manage – A very important aspect of threat assessment is the ongoing management of the risk. This requires developing tailored strategies to reduce the threat. Options can include mental health support, social services, law enforcement involvement, safety planning, and ongoing monitoring and follow up on the subject.

Threats made online differ from those expressed in person since digital platforms provide anonymity, lower inhibitions, and offer wide reach. As noted in the FBI’s Making Prevention a Reality guide (2019), perceived anonymity can reduce typical social restraints, allowing individuals to voice hostility or intimidation they might not display in face-to-face settings. Yet, detecting and evaluating threats that are posted online is important to prevent violence.

Assessing threats in a high-volume environment poses substantial challenges. The sheer number of online communications makes it difficult to distinguish which threats are credible and require further analysis. The FBI emphasizes that not every threatening message indicates a genuine intent to harm. The goal of assessing concerning communications is to determine whether a message is an expression of anger or frustration or a behavioral indicator of movement toward violence. An assessment helps decide which communications warrant deeper investigation or management intervention.

When assessing threats online, several factors must be considered — particularly the specificity, credibility, and intent behind the communication.

A threat is considered specific if it contains concrete information such as who will carry out the act, the intended target, when and where it will occur, and how it is supposed to happen. Specific details — such as the mention of weapons, timing, or location — increase the level of concern because they demonstrate planning or forethought.

Credibility relates to the source of the threat and its feasibility. Analysts evaluate whether the source is reliable or directly connected to the individual of concern, whether similar threats have been made before, and whether there is a consistent pattern of behavior. The assessment also considers how viable the threat is: does the individual have the means, access, or capability to act on their words?

Determining intent involves examining signs of motivation, planning, or commitment to carry out an attack. Indicators may include expressions of grievance, fixation on a target, or evidence of preparation. Establishing intent can be particularly challenging in online environments, where individuals may exaggerate or use violent rhetoric without a genuine plan to act.

Telegram

The messaging app Telegram was founded in 2013 by Pavel Durov, who previously founded the popular Russian social media app VK. Telegram has approximately 950 million registered users worldwide. Although it is a messaging app, Telegram operates more like a social media platform. Users register using a telephone number but can use any display name they want. Users can message each other directly, but the platform also has the concept of channels and groups where mass communication can occur.

In some channels, multiple users can communicate with each other, much like a group chat in which each username and its comments are visible. Other channels operate more as a broadcast system where only the admins can share messages. Users are able to join channels and are notified of any comments. As well as operating as a communications platform, some of these channels are also used as markets for buying and selling goods such as drugs, counterfeit items, and personally identifiable information (PII).

Over the years, Telegram has been used by a wide range of criminal communities. This includes terrorist activity, hacktivism, ransomware, hacking, CSAM, drugs, and the distribution of stolen data. In recent years, it has also become a hub for extremist rhetoric, with groups such as Terrorgram using the platform to promote their views and incite violence among followers. As Telegram’s role in criminal and extremist ecosystems has expanded, Telegram threat intelligence has become increasingly important for analysts and investigators seeking to monitor channels, identify threat actors, and connect Telegram-linked activity to broader online threat environments. At the same time, many other groups – often right-wing – have emerged on the platform, each with different ideological angles and audiences.

Telegram has long been criticized by law enforcement and security analysts for hosting extremist content, CSAM, and other illicit content. It has a reputation for not cooperating with law enforcement. In August 2024, Durov was arrested in Paris for not taking steps to curb the criminal use of Telegram. Since that time, the platform has taken some steps to remove channels reportedly conducting criminal activity, but there does not appear to have been any consistency to this activity.

Methodology

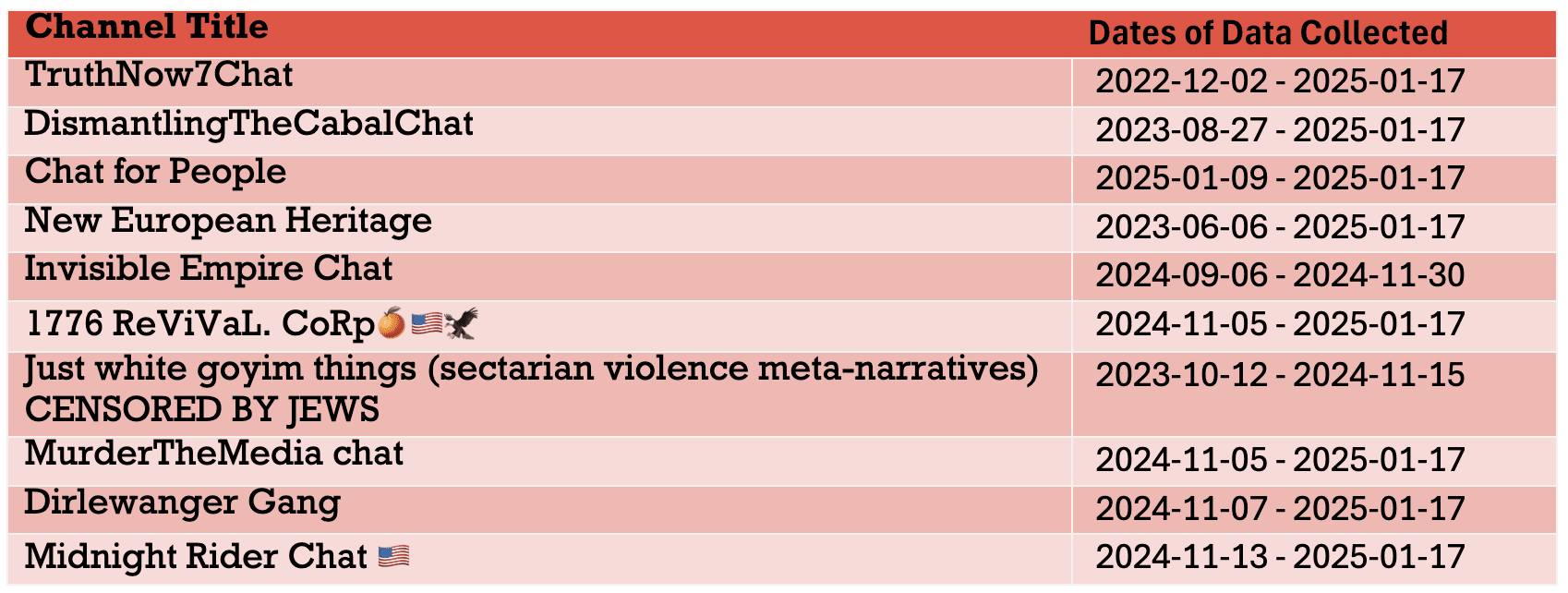

Using DarkOwl’s collection of Telegram channels, analysts identified and reviewed a variety of far-right channels and selected those with some of the most concerning content from a range of right-wing movements. Concerning content was defined as content that mentioned extremist views or violence, or that appeared to attack groups or individuals. Although we classified all of the channels as far-right, they spanned a range of ideologies within that belief system: some were explicitly pro-Trump, some were composed almost exclusively of J6 rioters, some were heavy on conspiracy theories, and others were racist and xenophobic.

Since our focus was on analyzing threatening language, we selected channels that were not overly image based. However, we acknowledge that images and memes constitute an important component of threat analysis. We also prioritized channels that were highly active and had a substantial number of members.

Below is a list of the channels selected and dates for which we had collected data that was analyzed as part of this project.

About the Data

A total of 190,535 messages written by 11,068 individuals were collected from the listed channels. To identify threatening and violent communication within this dataset, we used a set of threat detection tools developed by Mind Intelligence Labs. The tools are based on a machine learning model designed to automatically detect violent threats (Lundmark et al., 2024). Of the 190,535 messages collected, 5% (9,442) contained threatening or violent content. Nearly 4% of the users had posted at least one violent threat. These figures illustrate the exceptionally high volume of threatening communication, which poses significant challenges for threat assessors and law enforcement in determining the severity and credibility of individual threats.
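The headline proportion can be checked directly from the counts reported above. The following is a minimal sketch in Python; the variable and function names are illustrative and are not part of the detection tools used in the study.

```python
# Counts as reported in the text; all names here are illustrative only.
total_messages = 190_535
threat_messages = 9_442
total_users = 11_068


def share(part: int, whole: int) -> float:
    """Return part/whole expressed as a percentage."""
    return 100 * part / whole


threat_share = share(threat_messages, total_messages)
print(f"Threatening messages: {threat_share:.2f}% of all messages")

# The text reports "nearly 4%" of users without giving an exact count,
# so only a rough upper estimate can be derived from that figure.
approx_threat_users = 0.04 * total_users
print(f"4% of users would correspond to roughly {approx_threat_users:.0f} accounts")
```

The exact share is slightly under the rounded 5% quoted in the text, and the user figure is an approximation only, since the underlying count is not published here.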

Assessing Threatening and Violent Communication

To better understand the nature of threatening and violent communication, we conducted a qualitative content analysis of a random sample of 749 threatful messages that were automatically identified using Mind Intelligence Labs’ tools. Each threat was annotated according to five analytical categories:

- Explicit Target – The message clearly identifies a specific person, group, institution, or location as the target of harm. Example: “I’m going to make sure Senator James pays for this.”

- Operational Details – The author provides information on how violence should be executed (e.g., weapon type, method). Example: “I’m getting my AR-15 to shut them up.”

- Explicit Date or Time – A concrete date or timeframe is given for when the act will occur. Example: “You’ll all see what happens on July 4th.”

- Research on the Target – The writer indicates surveillance, investigation, or personal knowledge about the target. Example: “I know her schedule — she always leaves work at 6 p.m.”

- General Threatening or Hateful Language – Non-specific expressions of hostility, hate, or implied violence. Example: “People like them deserve to suffer.”
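The five categories above can be represented as a simple multi-label record. This is a hypothetical sketch rather than the annotation format actually used in the study: the class name, field names, and the choice of independent boolean flags are assumptions. Independent flags are used because the shares reported in the Findings section add up to more than 100%, which suggests that a single message can fall into several categories.

```python
from dataclasses import dataclass, fields


@dataclass
class ThreatAnnotation:
    """One annotated message. Class and field names are hypothetical."""

    text: str
    explicit_target: bool = False
    operational_details: bool = False
    explicit_date_or_time: bool = False
    research_on_target: bool = False
    general_threatening_language: bool = False

    def labels(self) -> list[str]:
        """Return the names of all categories flagged for this message."""
        return [
            f.name
            for f in fields(self)
            if f.name != "text" and getattr(self, f.name)
        ]


# Example messages taken from the category descriptions above.
examples = [
    ThreatAnnotation("I'm getting my AR-15 to shut them up.",
                     operational_details=True),
    ThreatAnnotation("People like them deserve to suffer.",
                     general_threatening_language=True),
]

for annotation in examples:
    print(annotation.labels(), "<-", annotation.text)
```

Keeping each category as its own flag makes it straightforward to count how often each feature occurs across a sample without forcing a message into a single class.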

Findings

The purpose of our analysis was to examine the extent to which the identified threats contained identifiable targets, operational details, or explicit temporal markers—features that are often indicative of intent, planning, and potential capability. Our findings revealed that 93% of the threats (697 cases) explicitly mentioned a specific target, indicating a strong focus on particular individuals, groups, or institutions. More than 41% of the threats (308 cases) included operational details or descriptions of how the act should be carried out, suggesting a degree of planning and tactical consideration. Only a small fraction, 0.3% (2 cases), contained an explicit date or time for the intended act, indicating that while detailed, most threats did not include a defined timeline for execution. When a timeframe was given, it was vague — for example, “next week” or “by tomorrow.” None of the threats contained information about research conducted on the target.
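The percentages in this paragraph follow from the case counts and the sample size of 749. A short Python sketch of the arithmetic is given below; the dictionary keys are illustrative labels, not identifiers from the study's tooling.

```python
# Sample size and case counts as reported in the text.
sample_size = 749
case_counts = {
    "explicit_target": 697,
    "operational_details": 308,
    "explicit_date_or_time": 2,
    "research_on_target": 0,
}

# Share of the annotated sample per category, rounded to one decimal place.
shares = {
    category: round(100 * count / sample_size, 1)
    for category, count in case_counts.items()
}

for category, pct in shares.items():
    print(f"{category:<24}{case_counts[category]:>5} cases  {pct:>5.1f}%")
```

No case count is given in the text for the general threatening or hateful language category (reported only as nearly 40%), so it is left out of the calculation.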

Nearly 40% of the analyzed threats contained general threatening or hateful language, reflecting a broad spectrum of hostility rather than concrete plans for violence. This category included dehumanizing expressions, where individuals or groups were referred to as “monkeys”, “cockroaches”, or other derogatory terms that strip them of human qualities. Such language serves to justify or normalize aggression by framing the target as less than human — a well-documented precursor to acceptance of violence in both extremist and hate-based contexts.

In addition to dehumanization, many threats expressed violent fantasies or wishes, such as hoping that harm, punishment, or death would befall a specific person or group.

These findings indicate that even when no actionable plans are present, generalized hate and dehumanizing rhetoric can reflect underlying attitudes relevant to risk assessment. Such expressions may foster or normalize an environment in which violence is encouraged, justified, or perceived as acceptable, making this form of language an important factor to consider in both threat assessment and ongoing monitoring of threats.

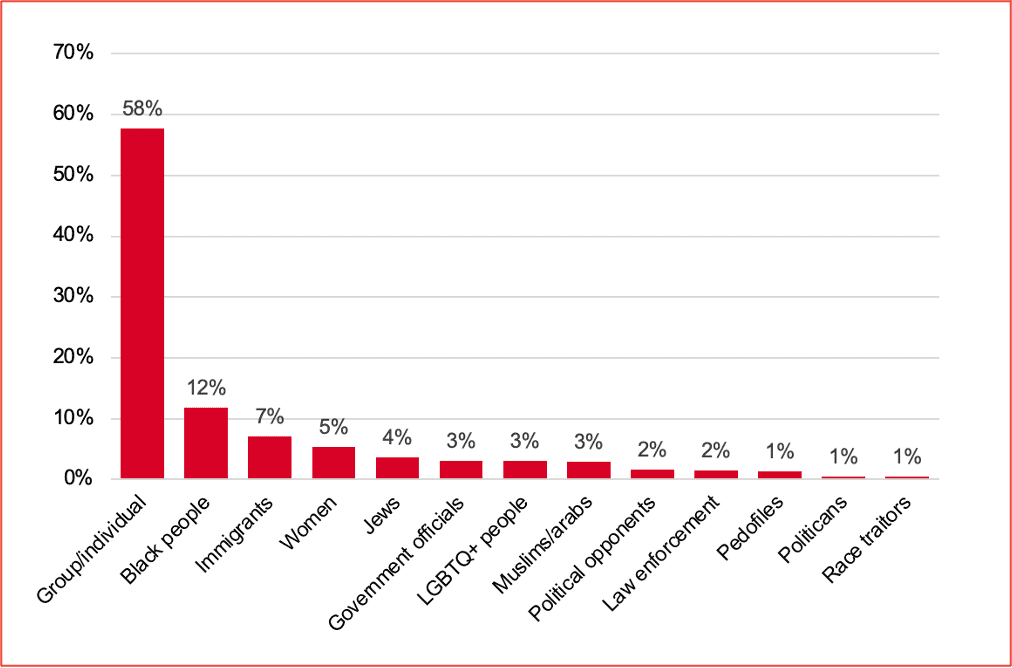

Explicit Targets

Almost all threats (93%) had an explicit target. More than half of the threats (58%) were directed toward unspecified groups or individuals (they/them, he/she, or you). These general expressions of aggression often use dehumanizing language and reflect a diffuse sense of grievance rather than a specific intent to harm. However, even non-specific threats serve an important function since they normalize violent discourse and reinforce group identity.

Explicitly racialized threats are highly prevalent. Black people (12%), immigrants (7%), Jews (4%), and Muslims/Arabs (3%) together constitute over one-quarter of all the analyzed threats. This pattern is consistent with far-right narratives centered on nationalism, racism, xenophobia, antisemitism, and anti-Muslim sentiment.

Threats against women (5%) and LGBTQ+ individuals (3%) reflect the intersection of misogyny and anti-LGBTQ+ sentiment within far-right Telegram channels. Although less frequent, threats against government officials (3%), politicians (1%), law enforcement (2%), and political opponents (2%) represent an important category directed toward institutions of authority. These messages often frame violence as legitimate resistance against a dysfunctional or corrupt state. Even though these threats form a smaller proportion of the total, they are of particular concern due to their potential to inspire real-world attacks on public officials or infrastructure.

A small share of the threats target pedophiles (1%) and “race traitors” (1%). Threats against alleged pedophiles are often framed as a defense of children or morality, providing a pseudo-legitimizing rationale for violence. In contrast, attacks on so-called “race traitors” show how perceived ideological disloyalty within the in-group is punished, rhetorically or violently.
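The target shares reported in this section can be tallied to check the grouped figures. The sketch below is in Python; the grouping into "racialized" and "authority" targets mirrors the paragraphs above, while the dictionary keys and variable names are illustrative assumptions.

```python
# Target shares (percent of analyzed threats) as reported in the text.
target_shares = {
    "unspecified groups or individuals": 58,
    "Black people": 12,
    "immigrants": 7,
    "Jews": 4,
    "Muslims/Arabs": 3,
    "women": 5,
    "LGBTQ+ individuals": 3,
    "government officials": 3,
    "politicians": 1,
    "law enforcement": 2,
    "political opponents": 2,
    "pedophiles": 1,
    "race traitors": 1,
}

racialized = ("Black people", "immigrants", "Jews", "Muslims/Arabs")
authority = ("government officials", "politicians",
             "law enforcement", "political opponents")

racialized_total = sum(target_shares[name] for name in racialized)
authority_total = sum(target_shares[name] for name in authority)
overall_total = sum(target_shares.values())

print(f"Racialized targets: {racialized_total}%")
print(f"Authority targets:  {authority_total}%")
print(f"Sum of all shares:  {overall_total}%")
```

The racialized categories add up to 26%, consistent with "over one-quarter". The reported shares sum to slightly more than 100%, which may reflect rounding or messages that name more than one target; the text does not say which.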

Operational Details

More than 41% of the threats included details on how the act should be carried out. References to specific methods offer valuable insight into how far-right actors imagine and express violence. The threats ranged from fantasies of large-scale attacks to symbolic punishments. While many of them may not reflect an immediate ability to act, the repeated calls for violence help to incite and encourage further violent behavior.

Shooting (31%) is the most frequently mentioned method, underscoring the centrality of firearms in far-right violent imagination. Guns are often presented as tools of justice or resistance, reflecting a broader cultural fascination with militarization and armed self-defense. References to specific weapons (e.g., “AR-15,” “rifle,” “sniper”) are common, and their frequency indicates potential access or aspiration toward weapon use.

Hanging (18%) and execution (10%) threats are notable for their symbolic weight. These methods are often framed as public punishment for perceived “traitors,” political opponents, or minority groups. Such imagery mirrors historical lynching narratives and functions both as intimidation and as a performative assertion of dominance.

Beating (13%) and torture or inflicting pain (8%) represent more personal and intimate forms of violence. These threats often emphasize suffering and humiliation rather than efficiency, indicating a sadistic dimension.

Threats involving burning (5%) and explosives (4%) are less common. Burning is often directed toward symbolic targets such as religious buildings or refugee centers, while explosive threats are associated with aspirations toward large-scale attacks. Although these references are relatively rare, they reflect higher levels of operational imagination and thus represent elevated threat potential.

A smaller share of threats involve stabbing (3%), poisoning (2%), or other methods (2%) such as being hit by vehicles, attacked by animals, drowned, or starved. These methods indicate creative variability in violent expression and sometimes suggest opportunistic or improvised violence.

Mentions of prison or arrest (3%) and deportation (1%) demonstrate how far-right actors also employ state-like punitive language. Such threats often frame violence as an extension of “justice” or legitimate punishment, blurring the line between vigilante violence and imagined authority.
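The method shares reported in this section can be ranked to show how concentrated the references are. The following Python sketch uses the percentages from the text; the dictionary keys and variable names are illustrative.

```python
# Method shares (percent of threats with operational details), as reported.
method_shares = {
    "shooting": 31,
    "hanging": 18,
    "execution": 10,
    "beating": 13,
    "torture or inflicting pain": 8,
    "burning": 5,
    "explosives": 4,
    "stabbing": 3,
    "poisoning": 2,
    "other": 2,
    "prison or arrest": 3,
    "deportation": 1,
}

# Rank methods from most to least frequent and track the cumulative share.
ranked = sorted(method_shares.items(), key=lambda item: item[1], reverse=True)

running_total = 0
for method, pct in ranked:
    running_total += pct
    print(f"{method:<28}{pct:>3}%   cumulative {running_total:>3}%")

top_three = [method for method, _ in ranked[:3]]
top_three_share = sum(pct for _, pct in ranked[:3])
print(f"Top three methods: {', '.join(top_three)} ({top_three_share}%)")
```

Ranked this way, three methods account for well over half of all references to a specific method, and the reported shares sum to 100%.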

Conclusion

Overall, the threat landscape on far-right Telegram channels is dominated by broadly directed, racially motivated, and ideologically charged hostility. The combination of generalized incitement and specific identity-based targeting suggests a dual function of such communication: maintaining a shared sense of grievance and providing moral justification for violence. Although explicit threats against named individuals are relatively rare, the pervasive use of dehumanizing and violent language toward entire social groups constitutes a persistent incitement environment.

The dominance of operational methods such as shooting, hanging, and beating in the threats shows two key aspects of far-right violent language: it is both militarized and ritualized. Firearms represent strength and control, while hanging and execution reflect ideas of punishment and revenge. Together, they express a worldview that portrays violence as justified and even necessary.

Although many threats lack clear plans for action, their impact should not be overlooked. They normalize violent attitudes, define who is seen as a legitimate target, and create a shared language that can encourage real-world violence.

The mix of modern weapons and old forms of punishment shows how far-right communities combine past and present ideas of violence into a single story of resistance, revenge, and exclusion.

Recommendations

- Monitor high-threat environments: Continuous monitoring of far-right online spaces is essential to detect emerging risks and shifts in rhetoric.

- Identify targeted groups and trends: Mapping which individuals or groups are being targeted, and how these patterns evolve over time, helps in understanding broader threat dynamics.

- Assess credibility carefully: Determining whether a threat is credible is challenging when analysis is limited to digital communication. Online expressions may range from symbolic aggression to genuine intent.

- Address incitement and inspiration: Even when individuals do not act directly, exposure to violent rhetoric and extremist narratives can inspire others to commit acts of violence. Efforts should therefore focus not only on explicit threats but also on messages that glorify or encourage violence.

References

Conway, M. (2020). Routing the extreme right: challenges for social media platforms. The RUSI Journal, 165(1), 108-113.

Federal Bureau of Investigation. (2019). Making prevention a reality: Identifying, assessing, and managing the threat of targeted attacks. U.S. Department of Justice. https://www.fbi.gov/file-repository/reports-and-publications/making-prevention-a-reality.pdf/view

Lundmark, L., Kaati, L., & Shrestha, A. (2024). Visions of Violence: Threatful Communication in Incel Communities. In 2024 IEEE International Conference on Big Data (BigData), pp. 2772-2778.

Mudde, C. (2000). The Ideology of the Extreme Right. Oxford University Press.

Mudde, C. (2019). The Far Right Today. John Wiley & Sons.
