[Webinar Transcription] New Regulations and What They Mean for Your Supply Chain

October 07, 2025


This fireside chat, “New Regulations and What They Mean for Your Supply Chain,” features legal expert Rich Hanstock and DarkOwl’s Lindsay Whyte as they unpack the evolving cybersecurity regulatory landscape across the UK and EU. The discussion explores the shift toward mandatory, continuous, and ecosystem-based compliance, highlighting key regulations such as the EU Cyber Resilience Act, NIS2 Directive, and the UK’s Cyber Security and Resilience Bill. With increasing supply chain complexity and heightened accountability, the speakers examine how organizations can proactively manage risk, leverage threat intelligence, and prepare for upcoming compliance deadlines—all while navigating the broader implications for cybersecurity professionals and industry resilience.

NOTE: Some content has been edited for length and clarity.

Kathy: And now I’d like to turn it over to Lindsay, a Regional Director for DarkOwl, and Rich Hanstock, Barrister and founder of pwn.legal, to introduce themselves and start our discussion.

Lindsay: Thanks very much, Kathy. The aim of today’s session is to shed some light on the regulatory landscape as it relates to cybersecurity practices in the UK and Europe. And obviously from DarkOwl’s perspective, we’re always keen to share how our technology and ever-evolving collection approach meets these regulations. But today, it’s important to spend a bit of time setting the scene, I think, and stepping back a little, because there’s a few things at play here which affect many more professionals than just those involved in DarkInt collection and threat intelligence.

So perhaps, Rich, I can start by asking you, as a specialist legal professional in the world of cybersecurity and data privacy: what is the regulatory landscape right now with regards to cyber resilience?

Rich: Thanks, Lindsay. I think it’s quite an exciting time to be talking about this. Jurisdictions around the world, it seems to me, are converging around this idea of the challenges and risks of cybersecurity being shared, rather than seeing responsibility concentrated in states or in a few larger, critical infrastructure type organizations. Take CrowdStrike, for example. Events like that surface into the popular imagination the sheer extent of our hidden dependencies on technology, many of which are not readily understood by or foreseeable to the average person, and the systems are vulnerable in ways that are not necessarily well understood either. But what is understood is that when it goes wrong, even for one company, even if that company isn’t currently a household name, that incident can have ramifications for a vast number of people outside that one organization. And so, if that keeps happening, and I think we have to assume that it will, that has the potential to erode the sense of security that many of us, at least, are fortunate to depend upon, and that some of us maybe take for granted. And when that happens at scale, it can become a national security issue. But the challenge is just so huge. And fundamentally, I think governments are realizing that the cybersecurity challenge is too big, too great, too rapidly evolving for states alone to solve.

So, for the last few years in the kind of cyber policy space there’s been a discussion around what’s been termed a ‘whole-of-society’ approach to cyber security. It’s this idea of partnerships not just between states and those key private sector organizations that are deeply embedded in the infrastructure of the internet and so on, but critically of cooperation within the private sector, between and across markets and sectors and jurisdictions, with the focus really being now on assuring business continuity, data security and integrity, so as to project confidence to end users and to other businesses that everyone’s working together to help to keep the lights on globally.

So, to answer your question, I think it’s the recognition in policy of a need for that whole of society, everyone working together in partnership approach to cybersecurity that is driving this kind of shift in the regulations towards focus on the supply chain, ensuring private sector organizations of all shapes and sizes are taking the threat seriously, not just to their own backyard, but looking outward to their dependencies in their supply chain as well. There’s a sense, I think, that regulators need to have the power to ensure that more organizations are thinking about business continuity and security with ever broader responsibilities and so on. But it’s all about enhancing our collective security.

Lindsay: Yes, I see what you mean. And what are you saying then is the sort of general direction of travel on that basis then?

Rich: Again, it’s broadly the same idea, right? More accountability for cybersecurity throughout product life cycles and throughout the supply chain. Whilst there is alignment around that central idea, national implementation is creating complexity for multinationals. And I think that there are effectively three big key shifts that I want to talk about.

First of all, we’ve got the shift from voluntary standards to kind of mandatory standards, at least for those who are in scope. Historically, cybersecurity standards have been largely self-regulated. You can get ISO certified, adopt various frameworks, get your Cyber Essentials and so on. All of it’s really good practice. Sometimes you see eligibility for a contract being conditional upon those kinds of certifications. It’s kind of a compliance requirement. But fundamentally, they’re voluntary. And what we’re seeing now is regulators kind of saying, well, if you’re in scope of our regulatory powers, that’s not going to be enough. You need to have these as kind of a minimum baseline. And that’s why we’re seeing legal duties of care being put on manufacturers and operators, as well as just the critical infrastructure providers. That’s shift one, voluntary to mandatory.

The second shift is a move from point-in-time security and assurance to more continuous monitoring and assurance, which is kind of linked to the first point. It’s not performative, or supposedly, it’s not just performative. You need to be taking this kind of focus on effectiveness and outcomes rather than just ticking boxes. So, for example, under the CRA, the EU’s Cyber Resilience Act, you don’t just certify a product is secure when you launch it, you’ve got ongoing obligations throughout its life cycle. So, if three years after release a vulnerability emerges, and it’s being exploited, and you become aware of that, you’ve got specific notification timelines, which are pretty sporty, actually, for reporting to the relevant authorities. And that fundamentally changes what compliance looks and feels like inside an organization. It’s not just, okay, we’ve got a certificate on the wall, big tick. It’s a continuous operational responsibility. That means that you have to understand the threat environment as it evolves. So, voluntary to mandatory, point in time to continuous.

Thirdly, from perimeter thinking to more ecosystem thinking. It’s this idea that traditionally compliance is focused on your backyard, within your fence, your organization’s security. These new regulations effectively make you responsible, to an extent, for understanding your own suppliers’ security. And in some cases, your suppliers’ suppliers, this kind of idea of nth-party security: where does it stop? You’re now accountable for risks that you might not even have visibility into at the moment. There’s kind of a question about underwriting your ability to discharge your own responsibility by getting insight into what your suppliers are doing. That’s part of the challenge in effect. And critically, the penalties are getting bigger and sharper teeth, you know, like 15 million euros or two and a half percent of turnover for CRA. That really changes the conversation in the boardroom, and will hopefully empower CISOs and certain people who are responsible for compliance in this space to step up and be listened to, and maybe have more budget than typically they’ve had previously.

Lindsay: That’s such a good point because there are now just these endless strings of supply chains in this day and age. Why do you think these changes are happening then?

Rich: Well, I think primarily it’s the incidents that I mentioned. We can list them off all day: SolarWinds, CrowdStrike, JLR, M&S. These weren’t necessarily isolated attacks on single companies in terms of the way that the impacts were felt; these were supply chain compromises that kind of cascaded across many people, many different organizations. And I think we have seen regulators watching companies with quite sophisticated security programs getting breached because of vulnerabilities in third party software that they maybe didn’t have any or enough visibility into. That goes back to the point I made a moment ago about perimeter-based security just not really working when the threat enters through your supply chain. We’ve also got, because of that kind of cascading effect, the sense of market failure, where cybersecurity incidents are what economists might term a negative externality, right? When a product is insecure, it’s not the manufacturer alone that bears all the cost. Customers suffer the impacts of breaches, critical infrastructure is disrupted, but the manufacturer’s liability might not necessarily capture what is regarded by the sort of person on the street as being fair. The idea is that if markets aren’t naturally optimizing for security because of the externalization of some of the cost, there’s a case for regulation stepping in to correct that market failure. I think regulators are trying to use the law to internalize those costs, to kind of make manufacturers and operators bear the true cost of insecurity.

Which actually leads me onto another important point, which I think is often overlooked in this space, which is the insurance market. There has been a lot of conversation about this around the JLR incident. Insurers, I think, have historically struggled to price risk effectively, to understand the risk, because there’s no kind of standardized way to assess security practices across supply chains. And I think without that, without baseline security standards, there’s a risk that the risk transfer mechanism kind of breaks down. So, look at the discussion around insurance and JLR: would the insurance that JLR was criticized for not having picked up even have been sufficient? Maybe not, right? And to the extent that that reflects a gap in the market, I think we’re going to see the insurance market mature, partly as a consequence of these regulations, partly as a result of the incident and the discussion that is now going on about it.

It’s not just about preventing breaches is my point. I think these regulations are also about creating more predictable risk environments so that insurance markets, ultimately capital markets, can function more effectively. So, without that predictability or that ability to understand what’s going on in the supply chain, where the dependencies are, where the vulnerabilities are, there’s a risk that the digital economy is more unstable than we would like it to be.

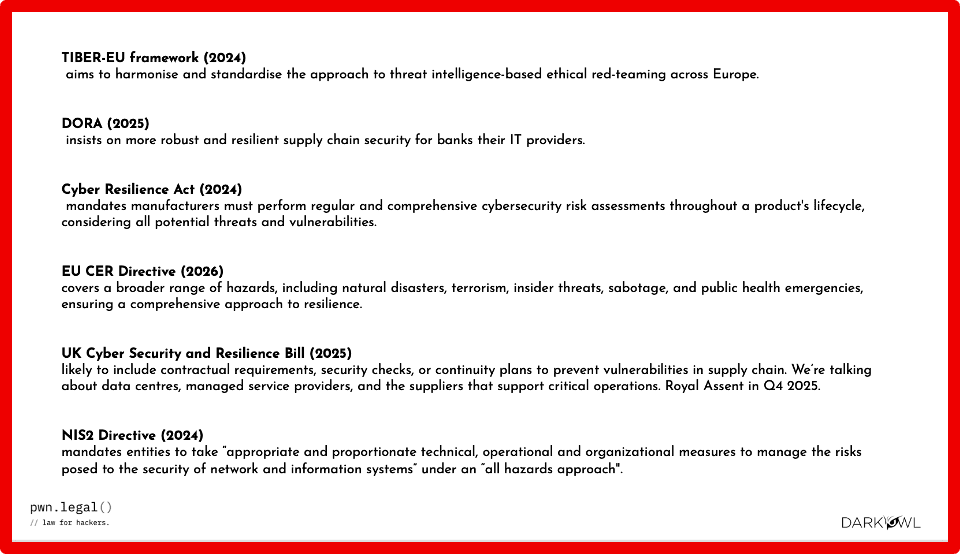

Lindsay: And on that question of the new regulations, could you talk a little bit more about what they are saying?

Rich: Sure. I mean, there’s a lot of them. I know you’ve– I think we’ve got a slide. If you could call that up, that’d be great. I’m not going to try and cover all the detail now, but I think there are kind of two or three main tracks.

We’ve got the EU Cyber Resilience Act, which came into force in December last year. The reporting obligations kick in in September ’26, with full compliance by the end of ’27. NIS2, alongside that, came into effect in 2023, and that was really about expanding critical infrastructure obligations. And a lot of the conversation in the UK now around the Cyber Security and Resilience Bill is about extending the original NIS regulations to managed service providers, bringing a big chunk of the supply chain into scope for the first time. We talked at the beginning about the big handful shifts, and we’re drilling down now into some more of the regulations and what it is that they actually say.

I think question zero that clients always ask is, am I in scope? I know scope is expanding; there’s a lot of talk about the fact that scope is expanding and the greater burden that therefore imposes on people. That’s obviously an interesting and important feature of these regulations, but it tends to drive the conversation around, well, there are some new regulations coming, how do I avoid them or minimize my exposure to them? Obviously, it’s important to understand that, but I always advise clients that the conversation doesn’t stop there. So, kind of my prediction is that the requirements that each of these frameworks bring will over time become market norms, to the extent that we could see those requirements invoked by analogy, like in private litigation, even against those who are outside the scope of regulatory jurisdiction. So, if you have an obligation, say in a contract, to take reasonable steps or perform due diligence, I think we’re going to see a failure to take steps that in some sectors are required by regulators potentially being deployed against people who are otherwise out of scope, in litigation or at least in negotiation around a commercial contract or following an incident. I think it makes sense, whether you’re in scope or not, to understand what more you can do to understand your exposure to risk.

Big handfuls: if you’re an EU manufacturer or importer, you’re looking at the Cyber Resilience Act. So if you’re making or importing products with digital elements into the EU, so that could be software, IoT devices, anything connected, you’re going to have obligations at sort of three main stages. Before the market, you’re looking at being able to demonstrate security by design in software and hardware, risk assessments, documentation, and so on. At market, there are things like CE marking and conformity assessments, which kind of build on the pre-market stuff. You’re looking at creating what’s called software bills of materials, or SBOMs, in a particular format, which need to be given to regulators on request, again to show that you understand where the dependencies are in your software.

Then the big shift is throughout the lifecycle of the product, right? You’ve got to monitor for vulnerabilities in your product throughout a support period, usually five years. And if you become aware of a vulnerability that’s actively exploited, maybe through responsible disclosure or otherwise, you’ve got to notify national authorities and ENISA within 24 hours, with a detailed report within 72 hours and a final report within 14 days. This is pretty quick in the context of an incident, right? And critically, becoming aware can include constructive knowledge. So, if it’s publicly available, you could be deemed to know. So, you need to be monitoring what’s going on, have a vulnerability disclosure policy and be engaging responsibly with those who make those responsible disclosures; that’s a bit of a bugbear of mine.
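To make those reporting windows concrete, here is a minimal Python sketch that turns a "became aware" timestamp into the three deadlines mentioned above. It assumes, purely for illustration, that all three clocks start at the moment of awareness; the legal trigger for each stage may differ, and the names `CRA_WINDOWS` and `reporting_deadlines` are hypothetical, not taken from any regulation or tool.

```python
from datetime import datetime, timedelta, timezone

# Reporting windows as quoted in the discussion above. Assumption: every
# window is measured from the moment of awareness; check the actual legal
# trigger for each stage before relying on this.
CRA_WINDOWS = {
    "early_warning": timedelta(hours=24),
    "detailed_report": timedelta(hours=72),
    "final_report": timedelta(days=14),
}


def reporting_deadlines(aware_at: datetime) -> dict[str, datetime]:
    """Return the latest submission time for each reporting stage."""
    return {stage: aware_at + window for stage, window in CRA_WINDOWS.items()}


# Hypothetical awareness time, expressed in UTC to avoid time zone ambiguity.
aware = datetime(2026, 9, 14, 9, 30, tzinfo=timezone.utc)
for stage, due in reporting_deadlines(aware).items():
    print(f"{stage}: due by {due.isoformat()}")
```

Keeping the timestamps time zone aware matters in practice, because a 24-hour window leaves little room for confusion about which clock an incident log was written in.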

NIS2 then: if you’re kind of an essential or important operator in, say, energy, transport, banking, health, infrastructure, those kinds of sectors (in the UK this is expanding to MSSPs), you’re going to have similar key obligations around risk management, including understanding your supply chain and your exposure to risk there. Again, incident reporting: early warning within 24 hours, detailed notification within 72 hours, final report within a month. And so, you can see an integration point here, where if you’re an operator within NIS2, you’ve probably got to verify your suppliers are compliant with the CRA.

So effectively, what we’re seeing is a cascading accountability. So, you can’t just take your vendors at their word, you know, you just get a warranty that says, “Oh yeah, we comply with all of this stuff and it’s all fine.” You actually need ongoing visibility into their security posture as well as your own. And it makes sense as well to make sure that you have the contractual levers, but critically the relationships in which those levers might be pulled to ensure that you’ve got the right information available to you and to demonstrate that you have the right information if a regulator comes knocking, as well as the competence to interpret that information. So, this is about investing in relationships, contracts, people, so that you can ensure that you’re able to assure a regulator or a supplier that you have the visibility that you need into your organization, but also those on which you depend. It’s really, really quite broad.

Lindsay: Yeah, and I guess, bringing these two subjects together, you know, taking that spider’s web of supply chain now, can companies in your opinion rely on, you know, the government for all matters relating to threat intelligence, you know, is it sufficient to rely on the government and, you know, government punishments and that sort of thing to prevent threats in future?

Rich: No, so I think the short answer is no. Threat intelligence from government on its own isn’t enough, and I think that is by design, going back to my point earlier about reducing perceived dependence on government to mitigate these risks. And I think effectively the regulations are structured to make sure that you are taking responsibility for your own security, your own company’s security, as well as that of your supply chain, and to make that make commercial sense. That’s the point about regulation to correct perceived market failure. From a big broad policy perspective, I think that reflects a broader global shift in thinking about commercial resilience as a component of national security, in which we all play a part, right?

So again, come back to JLR. People are asking now, what is the proper role of the state when an incident hits, right? Kind of like the conversation we were having a few years ago about banks and fraud, right? Who should bear the cost of a hostile act? What protective measures need to be in place and then fail, and how severe do the impacts need to be, before somebody other than the victim (typically, in that dynamic, a consumer) intervenes, or maybe even the state intervenes, to kind of swallow or mitigate the loss?

And my sense is that these regulations are kind of the beginning of a clarification of what the role of the state is in a cyber-attack, a cyber incident. It’s more about the state setting standards and enforcing them, and giving advice about how to meet those standards, without providing the kind of operational security service at scale for individual companies or people in the supply chain. That’s everyone’s responsibility, not just the state. It’s that whole of society approach again, right? And again, states can help with things like quality assurance, accrediting cyber security solution providers. They can help with setting what Cyber Essentials should be, that sort of thing. But the day-to-day security, your backyard and your competitors, that’s on you, that’s the clear message. And you can kind of see that as and when a critical mass adopt that mindset, to the extent that that hasn’t already happened, whether compelled through regulation or voluntarily, the idea is we should be more secure because there is this natural surveillance within the market around threats. But that doesn’t mean you can outsource it; you need to be looking at your own security and that of those on whom your continuity depends.

Specifically on threat intelligence, I think government threat intelligence is clearly invaluable. The NCSC in the UK, CISA in the US, ENISA in the EU: they tend to provide quite strategic, contextual information about kind of nation-state level threats, because that’s naturally where the focus is, big vulnerability disclosures, maybe some sector-specific guidance. But because it’s operating at that macro level, it’s not enough on its own. That’s why I say it is not enough, because you look at what the CRA and NIS2 require you to be looking for: they are requiring you to monitor for threats that are really quite specific to your products and your supply chain. And that means, effectively, if your components have got vulnerabilities, if your credentials are circulating on criminal forums, if your employees or contractors or suppliers are vulnerable or being targeted, that kind of granular operational intelligence is on you to collect and understand and interpret and assess. Government just can’t provide that kind of granularity as a service to all industries all of the time. They don’t know your specific bill of materials, your supplier relationships, your attack surface. And there’s always a bit of a lag, right, in the filtering down of government intelligence to public advisories, by which time, given the pace at which the threat is evolving, especially with AI and so on, attackers have probably moved on a little bit. I think that’s always part of the challenge with public advisories. And I think governments accept this, right.

We’re seeing regulatory guidance that explicitly encourages companies to use commercial threat intelligence, right? Look at the British lawyer, look at the NCSC, for example. The NCSC’s guidance on supply chain security recommends continuous monitoring using multiple intelligence sources and seeks to equip companies to kind of understand what the market is offering in the threat intelligence space and the continuous monitoring space in order to make an informed choice for their organization as between what could be quite expensive, in some cases, and quite technical, different products. So yeah, that I think is the role of government. It’s helping you to make choices, but it’s the making of those choices that you still need to do in order to get the information that you need. So, if you’re relying solely on government threat intelligence, you’re probably not going to satisfy the appropriate procedures standard in the regulations. You need to be demonstrating proactive continuous monitoring, tailored to your risk profile. Loads of vendors do that, some are better than others.

I think fundamentally these regulations are trying to align compliance incentives with actual security outcomes. The idea is that we move away from this box-ticking compliance, much more towards actually improving your resilience to cyber attack, which is kind of in your commercial interest anyway, right? But it’s also about making sure that you can demonstrate, if you’re audited or if a supplier comes knocking, that you are doing all the right things, as well as actually using the intelligence in the right way.

Anyway, I’m conscious I’ve been talking for quite a long time, and I’ve got a few questions for you, Lindsay, if I may, about your experience at DarkOwl. So, reflecting on your experience with your clients, people who use your products, what kind of common challenges are they facing? Why is supply chain security important to them?

Lindsay: There’s a few reasons. I think one is best explained by the way that cloud technology is creating what could be described as a logarithmic network effect, the sort of spider’s web that I described earlier, where the ease of integrations between technology, which is a brilliant thing, causes an enormous reliance on external parties and risks from the supply chain. I mean, as you mentioned earlier, and I think it’s worth repeating: we know that last month the CEO of Sophos, an enormous European cybersecurity company, Joe Levy, summed it up nicely by saying that third party risk management is now “Nth Party Risk Management.” That deserves repeating, given the endless string of suppliers involved in the provision of a product or a service.

And it’s not just B2C end products. Most B2B products on the infrastructure level now can’t escape a world of interdependence and over-reliance on suppliers. We all thought data centers were sort of the end of the supply chain thread, where risks are more controllable from a compliance perspective, etc. But you just have to look at the recent events with undersea cables in the Red Sea to realize that no one is safe.

And I think another big issue is the diversity of regulations as they relate to supply chain risk. If your supply chain is getting longer, so too is the certainty that some of those suppliers are based in a different jurisdiction to your company’s, and they’re probably more focused on that jurisdiction too from a compliance perspective. So, one of the regulations that you mentioned, the UK Cyber Security and Resilience Bill, you know, that extends the current Network and Information Systems Regulations to cover more ground, like you mentioned, managed service providers. And that is an enormous chunk of the cybersecurity supply chain for almost any sized company. So, not only are you contending with different suppliers, more suppliers, but also different countries and approaches to regulations in which those suppliers operate.

Rich: Yeah, so thinking about those security professionals’ jobs, how are they impacted day-to-day by these regulations?

Lindsay: Yeah, I think that’s probably why we talk about people specifically working in the roles within threat intelligence and allied professions. If you take a look at the micro level, there are so many things to talk about even just within that category.

There’s a lot of things to consider. There’s the onboarding of third parties and all the checks that that entails. Then, for example, within the lifetime of a third-party contract, you have the ongoing maintenance and technical debt. There’s the offboarding, the decommissioning phase, so often done with less support from cross-functional teams who just want to get rid of the contract they have with this supplier.

There’s the ever-evolving world of application security too. But then, what about the consultants, in a world of outsourced services and staffing? You know, the people who have been working on this technology or so, have they got key cards on them? Then there’s that added issue of maybe areas that are blended with corporate security responsibilities. You need to account for those. And stepping back even further, this is all in a world in which an information security professional probably doesn’t actually own the supplier relationship or even project manage the deployment. So, looking at all of these variables, from the job level for people working in security through to this macro level of nation-state threats, as you mentioned quite rightly, in complex and interdependent supplier applications and networks, it’s no surprise that some of the prevailing guidance is about how to take matters into your own hands, as you ended with, because we can’t readily rely on the government to sort it out for us.

Rich: Yeah, I mean, there’s the inflection point right between what we’ve been talking about. New technologies and sort of big data, there’s more data out there swimming around, there’s got to be an opportunity there, right, to better understand these threats?

Lindsay: Yeah. We should probably talk about something positive, I think, in all of this, because no doubt we’re all affected by the speed with which data can be crawled and fused in the threat intelligence sector. And yes, the explosion in supply chains means that there’s more ways for threat actors to get lucky, you know, business email compromise, service desk social engineering and beyond. There’s a broader attack surface, meaning there’s need for more threat intelligence. And I think thankfully, there’s now a renewed attention you’re starting to see on threat intelligence and open-source intelligence that encapsulates everything from APT group reconnaissance to Twitter feeds, and ways that we can fuse this normalized data to give warning signals to information security pros. Alert fatigue is a problem, especially, you know, the moment you introduce responsibilities to monitor the supply chain and the wider regulatory consequences for doing so. The answer will inevitably lie in looking over the horizon, looking over the IT network and strategically addressing the issue rather than tactically. And technology can certainly help us do that.

Rich: What a neat segue into DarkOwl. So how has DarkOwl helped to equip information security professionals and others to navigate that increasingly complex environment, think differently about it and get ahead of those risks?

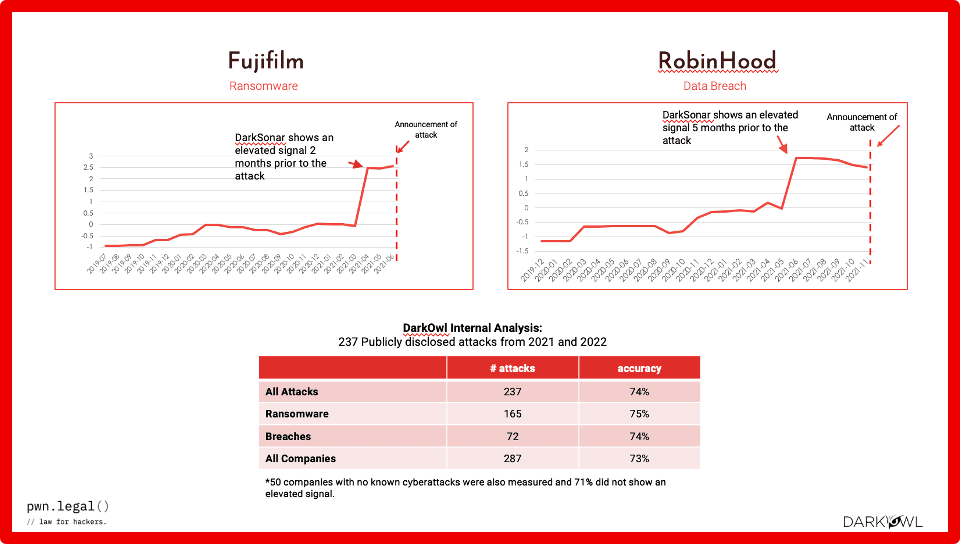

Lindsay: Yeah, when we were sort of thinking about this, we developed something called DarkSonar. So, DarkSonar is a risk score. When there’s been more activity surrounding a company’s domain and staff credentials on the darknet, essentially it would let you know when there has been more exposure than you’d normally expect. Breaking this apart a little bit, it gives a relative risk rating to an email domain that considers the nature, the extent and severity of credential leakage on the darknet, to provide a company with a signal that acts as a measurement of that company’s exposure in advance of an attack. Because we know that one of the biggest threat vectors to this day is still compromised credentials for entry to a system. And we tested this metric against 237 cyber-attacks occurring between 2021 and 2022 and found our signal was elevated within the four months prior to an attack, as it says there, for 74% of the attacks on organizations. And I suppose there’s three things going on here.

So, number one, it’s data-driven future warnings as opposed to alerts after the fact. Number two, it’s scalable to all domains, suppliers included. In fact, SecurityScorecard have endorsed this for us publicly for this reason. And then finally, it offers companies the ability to measure market benchmarks, because their supplier may not know where their exposure lies in relation to other companies, especially in relation to government departments and local councils, for example, that sit side by side but are actually not always sure as to what exposure level they should come to expect, especially if you can benchmark it against predicting breaches and ransomware attacks.

So, this is the way for you and your supplier to do just that. It’s one contribution we’re making to help organizations, at scale, look over the horizon at risks to their suppliers and by extension themselves.
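The idea of a relative signal, one that flags when a domain shows more exposure than you would normally expect, can be illustrated with a short Python sketch. This is not DarkSonar's method, which the discussion does not describe in detail; it is a generic z-score comparison of the latest weekly count against the same domain's earlier baseline, and the function name, threshold, and sample counts are all hypothetical.

```python
from statistics import mean, stdev


def exposure_signal(weekly_counts: list[int], threshold: float = 2.0) -> tuple[float, bool]:
    """Score the latest week's exposure count against the earlier weeks.

    Returns (z_score, elevated). Illustrative only: a plain z-score,
    not any vendor's actual scoring model.
    """
    *baseline, latest = weekly_counts
    if len(baseline) < 2:
        raise ValueError("need at least two baseline weeks")
    mu = mean(baseline)
    spread = stdev(baseline)
    if spread == 0:
        # A perfectly flat baseline: any increase counts as an infinite jump.
        z = float("inf") if latest > mu else 0.0
    else:
        z = (latest - mu) / spread
    return z, z >= threshold


# Hypothetical weekly counts of exposed credentials for one email domain.
z, elevated = exposure_signal([3, 4, 2, 5, 3, 4, 20])
print(f"z-score: {z:.1f}, elevated: {elevated}")
```

Scoring each domain against its own history is what makes the signal relative: a large organization with routinely high counts is not flagged simply for being large, and the same function can be run over a list of supplier domains.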

Rich: That predictive model sounds really critical for businesses that are trying to get ahead of a threat, right? And/or if you want to criticize someone else in your supply chain who didn’t get ahead of it. I was just going to say, did you have some slides that kind of demonstrated that?

Lindsay: Yeah, so if we look at a couple of examples that we threw together of sort of brand names, just to make the data pop a little bit, you can sort of just visually evaluate the success of using this sort of metric to predict an oncoming attack, just looking at Fujifilm and Robinhood and the ransomware and data breaches that they experienced, respectively.

So yeah, I mean, this is something that we’re working on, we’re always looking for people to try it, to test it out. We like to be very transparent and understand where people are in their journey. This industry only works, threat intelligence only works if, you know, information flows both ways and we can certainly benefit from that. So, I mean, talking of that, I mean, perhaps we can turn to some questions from the floor because we’ve both been talking enough now.

So, Kathy, I don’t know if any questions have come in, but we can maybe answer any that have.

Kathy: Yes, thanks, Lindsay. We have one question that came in. It is: your webinar is looking at future trends, but from existing commercial customers, is DarkOwl seeing any trends today in how to leverage the darknet?

Lindsay: Good question. It’s funny because I was reading the UK government’s chronic risk report that was brought out last month, and inside it, it details the ways in which so many risks are converging. So, I mean, the report itself was consistently emphasizing the interdependence of cyber risks, geopolitical risk, economic risk, even ecological risks. And one of the long-term uncertainties they officially were outlining is that the internet is going to become fragmented into sort of splinternets, which basically means that regional policies will isolate digital interactions and data access, creating sort of digital islands. And when you add that thought to the fact that, at the same time in the UK, we’ve got an explosion of VPN adoption since the Online Safety Act, there’s the risk that we’re re-anonymizing the internet. Really, what that all means is that a big trend we’re hearing from customers and partners is that they’re finally treating the darknet as an online space, just like the rest of the internet, which is needed for brand protection and situational awareness, just as much as they’re using the surface net for those same purposes.

Kathy: Okay. Thank you, Lindsay. We have another question that has come in: We are a mid-sized manufacturer, December 2026 feels close for CRA reporting obligations. What should we be doing now?

Rich: Yeah, it is close. I tend to advise my clients to kind of phase their preparation over the next 12 to 18 months if they haven’t started already. Their objective, first of all, really needs to be making sure they’ve got the right people on it, sort of the right consultants and lawyers, to help ensure preparedness. And the first thing to do, I suggest, really is to map the supply chain: get visibility into what components you’re using, who your critical suppliers are, what your relationships look like, and what contractual and commercial levers you’ve got to get information about their exposure to risk. Where you have a gap, how do you fill it? Do you need to buy threat intelligence? Do you need to buy access to data? That kind of thing. Then you assess your vulnerability monitoring. Can you at the moment detect when your components have actively exploited vulnerabilities? Are you researching vulnerabilities in your software yourself? If not, I suggest certainly the former is quite a serious gap. Start looking at continuous monitoring solutions, get some quotes, start integrating. Then, if you’re not already generating SBOMs, the software bills of materials, start building or procuring that capability, because that’s again foundational to compliance.
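To make the vulnerability-monitoring check Rich describes concrete, here is a minimal Python sketch. It assumes you already hold an SBOM-style inventory of components and have access to some feed of actively exploited vulnerabilities. The data model, the component and supplier names, and the `EXAMPLE-0001` identifier are all hypothetical and exist only for illustration; a real setup would parse an actual SBOM file and a real advisory feed, and would need proper version-range matching rather than exact version strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    """One entry in a simplified, hypothetical SBOM inventory."""
    name: str
    version: str
    supplier: str


@dataclass(frozen=True)
class ExploitedVuln:
    """One entry in a hypothetical feed of actively exploited vulnerabilities."""
    vuln_id: str
    component: str
    affected_versions: frozenset


def find_exposed(sbom, feed):
    """Return (component, vulnerability) pairs where an SBOM entry matches
    a feed entry by component name and exact version string."""
    by_component = {}
    for vuln in feed:
        by_component.setdefault(vuln.component, []).append(vuln)

    hits = []
    for comp in sbom:
        for vuln in by_component.get(comp.name, []):
            if comp.version in vuln.affected_versions:
                hits.append((comp, vuln))
    return hits


# Made-up inventory and feed, purely for illustration.
SBOM = [
    Component("libexample", "1.2.0", "Example Supplier Ltd"),
    Component("netwidget", "4.0.1", "Widget Co"),
]
FEED = [
    ExploitedVuln("EXAMPLE-0001", "libexample", frozenset({"1.1.0", "1.2.0"})),
    ExploitedVuln("EXAMPLE-0002", "netwidget", frozenset({"3.9.0"})),
]

exposed = find_exposed(SBOM, FEED)
for comp, vuln in exposed:
    print(f"{comp.name} {comp.version} ({comp.supplier}) is affected by {vuln.vuln_id}")
```

Keeping the supplier name on each component means a match points straight to the relationship, and the contractual levers, that need attention.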

And then once all that’s in place, we need to look at incident notification planning. So, running tabletops: what does it look like in practice to meet that kind of 24-hour notification timeline, as the case may be? Who needs to be involved, who calls whom, at what point, who makes the decisions? And these could be quite big decisions, right? Like, do we pay a ransom? Whose job is it to decide, and what’s recorded? Where is it recorded? Probably not on a compromised system, right? Whose job is it to record everything? And then test them, test the response procedures, document them, improve them, test them again. And it’s kind of a continuous cycle.
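For a tabletop exercise, it can help to make the notification clock explicit. The Python sketch below is an illustration only: it assumes a single 24-hour window measured from the moment the organization becomes aware of the incident, as mentioned in the discussion. The function names and the example timestamps are hypothetical, and the window that actually applies to a given report should be confirmed with legal advisers.

```python
from datetime import datetime, timedelta, timezone


def notification_deadline(aware_at, window_hours=24):
    """Return the latest time a notification can be sent, given when the
    organization became aware. The 24-hour default is an assumption taken
    from the discussion, not a statement of the legal rule."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware to avoid ambiguity")
    return aware_at + timedelta(hours=window_hours)


def hours_remaining(aware_at, now, window_hours=24):
    """Return the hours left before the deadline (negative once it has passed)."""
    deadline = notification_deadline(aware_at, window_hours)
    return (deadline - now).total_seconds() / 3600


# Hypothetical tabletop scenario: awareness at 09:30 UTC, status check 12 hours later.
aware = datetime(2026, 12, 1, 9, 30, tzinfo=timezone.utc)
check = datetime(2026, 12, 1, 21, 30, tzinfo=timezone.utc)

deadline = notification_deadline(aware)
remaining = hours_remaining(aware, check)
print(f"Notify by {deadline.isoformat()}; {remaining:.1f} hours remaining")
```

Requiring timezone-aware timestamps is a deliberate choice here: during an incident involving teams in several countries, a naive local time is an easy way to miscount the window.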

What else? Reviewing supplier contracts: you probably need, or will be asked, to give kind of CRA-specific warranties and indemnities. Make sure they’re fair and that there isn’t this kind of knee-jerk, complete and utter transfer of risk onto you. On the topic of risk transfer, think as well about insurance. Whilst I think I’ve said that the market is still maturing, if you’re not insuring, make sure the risk is at least surfaced and noted at the correct level. I think the companies that struggle in 2026 and 2027 will be those who kind of see this as a bit of a last-minute compliance exercise, trying to buy their way into a performative compliance at the last second. Not acting now, I suggest, is also a decision, and you should think about where the accountability for that decision might lie. If you don’t know where that is, it’s probably you. The companies that are succeeding in 2027 will be those who have embedded security monitoring, continuity planning, and so on into their operations. Now, easier said than done, right? It needs investment and time and money and people, but that’s the way of the world.

Kathy: Great. Thank you, Rich. Lindsay and Rich, it looks like that’s the last of our questions, and I just want to thank the both of you for an insightful discussion today.

And as a reminder to all of our attendees, we will be following up via email with a link to the recording and other resources. If you’d like to contact either Lindsay or Rich, their contact information is presented on this slide. And we thank you again, and we look forward to seeing you at another webinar in the future.
